Construction Economics and Building

Vol. 26, No. 1

2026

ARTICLES (PEER REVIEWED)

Formation of Collective Sensemaking Against Calamities in Global Construction Engineering Projects

Sarath Gunathilaka1, Jas Lall2, Amrit Sagoo1

1 Department of Construction Management, School of Architecture, Design and the Built Environment, Nottingham Trent University, Nottingham, United Kingdom

2 Carter Jonas, Two Snow Hill, Birmingham, United Kingdom

Corresponding author: Sarath Gunathilaka, sarath_gunathilaka@yahoo.com

DOI: https://doi.org/10.5130/j775ff06

Article History: Received 24/12/2024; Revised 04/07/2025; Accepted 20/08/2025; Published 27/02/2026

Citation: Gunathilaka, S., Lall, J., Sagoo, A. 2026. Formation of Collective Sensemaking Against Calamities in Global Construction Engineering Projects. Construction Economics and Building, 26:1, 1–22. https://doi.org/10.5130/j775ff06

Abstract

Collective sensemaking is key for teams in global construction engineering projects (GCEPs) to establish resilience against the calamities they encounter. Both natural and man-made calamities that occur in these projects adversely influence team performance, and the teams need to develop resilience against them. Therefore, they need to engage in collective sensemaking promptly to know what is going on in the work environment. However, making collective sense is a significant challenge for them because, owing to their virtual team setup, the team members do not interact face-to-face in physical proximity. Knowing how collective sensemaking takes place can help them identify the best practices for its formation, but the components of collective sensemaking in these projects remain a knowledge gap in the literature. Thus, this paper conceptualizes a model of collective sensemaking with its components by reviewing the literature and confirms its model fit through the results of a questionnaire survey among team members in GCEPs. Both exploratory factor analysis and confirmatory factor analysis were applied to confirm this model and its components, which constitute the main findings. As a contribution to practice, the teams in GCEPs can use this model to understand which aspects are to be prioritized in forming collective sensemaking to become resilient against calamities. These findings also offer an original theoretical contribution to the construction management research domain because future researchers can use the model and associated survey instruments to develop further theories and practices.

Keywords

Calamities; Collective Sensemaking; Global Construction Engineering Projects; Team Performance; Team Resilience

Introduction

Research evidence shows that making collective sense is important for project teams to become resilient against calamities (Boe-Lillegraven et al., 2023; Bolton and Landells, 2023). In particular, this is essential for the project teams in the global construction engineering projects (GCEPs), for example, the teams in global supply chain projects (Preuss et al., 2024). Collective sensemaking is defined in the literature review section. In general terms, collective sensemaking means making sense of an issue by all team members collectively at the team level to understand what is going on around the project work environment. In particular, collective sensemaking seems ubiquitous in work environments in crisis situations (Waller and Uitdewilligen, 2008), i.e., the calamities in the context of this paper.

Calamities are tremendous performance challenges for the teams in GCEPs nowadays, for example, the recent COVID-19 pandemic. According to the online Cambridge Dictionary, a calamity is a serious accident or a bad event that can cause damage or suffering to people. The United Nations accepted two calamity types, i.e., natural and man-made calamities (Green, 1993). Natural calamities include blizzards (Cappucci, 2024), cyclones (Forbis et al., 2024), earthquakes (Qiu et al., 2024), floods (Dharmarathne et al., 2024), hurricanes (Comola et al., 2024), landslides (Svennevig et al., 2024), tornadoes (Forbis et al., 2024), tsunamis (Iwachido et al., 2024), volcanic eruptions (Bilbao et al., 2024), and wildfires (Ferreira et al., 2024). The other category, man-made calamities, comprises disasters caused by people. The online Cambridge Dictionary defines a disaster as an event that results in great harm, damage, death, or serious difficulty. Although the two terms are often used interchangeably, a calamity is typically regarded as the more severe and impactful of the two, because a disaster, which is generally a catastrophic event that causes widespread damage and disruption, can create a calamity. This second category includes calamities such as arson (Ribeiro et al., 2024), biological or chemical threat (Reddy, 2024), civil disorders (Braha, 2024), crimes (Davies and Malik, 2024), cyber-attacks (Teichmann and Boticiu, 2024), terrorism (Green, 1993; Kanwar and Sharma, 2024), and war (Green, 1993; Wilson, 2024).

Due to technological developments around the world, the globalization of projects is increasingly popular in the modern global construction industry. As a result, the use of virtual GCEP teams to undertake construction works is a common practice today. The GCEP teams can face both natural and man-made calamities, and their performance can be adversely affected when a calamity occurs. Both categories of calamities are performance risks for the teams in GCEPs, but natural calamities are more severe because they come suddenly without any prior information. Natural calamities take place without human intervention and create performance challenges that are outside the control of the GCEP teams. However, there may be a possibility of receiving prior warning about man-made calamities; thus, these calamities may be able to be controlled, and appropriate resilient actions may be taken, but not always. The literature shows evidence on the adverse influence of calamities on project performance, society, or countries. For example, the COVID-19 pandemic influenced the construction project performance in the United Arab Emirates, resulting in schedule delays, disrupted cash flows, delayed approvals and inspections, and serious health issues for the construction workers (Sami Ur Rehman et al., 2022). Furthermore, Khodahemmati and Shahandashti (2020) confirmed the post-disaster cost movements of statistically significant increases in the cost of materials such as I-beams and channel beams following Hurricane Katrina in New Orleans, Louisiana. Such cost increases affect project team performance adversely. Furthermore, Lee et al. (2021) confirmed the influence of natural disasters on energy consumption globally, collecting data from 123 countries over the period of 1990–2015. 
Moreover, the Global Assessment Report on Disaster Risk Reduction (GAR) 2025 reports that disaster costs have grown to approximately USD 202 billion annually, but the true costs of disasters exceed USD 2.3 trillion when cascading and ecosystem costs are taken into account.

These examples and statistics indicate that calamities, particularly natural calamities, can adversely affect the performance of GCEP teams, which need to build team resilience to maintain the accepted levels of performance (Júnior et al., 2023). The most important point here is that these teams need to form collective sensemaking on calamities immediately to become resilient because these global project teams work in virtual team setups. Generally, making collective sense at the team level is difficult in realistic terms because it poses team coordination requirements that can easily be broken down (Klein et al., 2010). This is more difficult in GCEPs due to the fact that team members reside and work in virtual team setups without engaging in face-to-face communications and interactions (Tavoletti and Taras, 2023). Therefore, making collective sense promptly is practically difficult, although this is needed for them to become resilient against calamities. As such, how collective sensemaking can take place in GCEPs against calamities becomes an essential research theme for the modern construction industry. In order to understand the approaches for enabling collective sensemaking, an understanding of the components of collective sensemaking is needed. Although collective sensemaking has been researched for several decades (e.g., Weick, 1993; Weick and Roberts, 1993; Bitencourt and Bonotto, 2010; Klein et al., 2010; Bietti et al., 2019; Knight et al., 2024), evidence for identifying its components for GCEPs is lacking in the literature, representing a significant knowledge gap. There were some noteworthy attempts to model collective sensemaking, but these models were not in the context of GCEPs, and thus, this knowledge gap is still a burning issue in practice. For example, Cristofaro (2022) introduced a model of a co-evolutionary framework of organizational sensemaking. Furthermore, Lübcke et al. 
(2025) developed a multimodal collective sensemaking model for extreme contexts with evidence from maritime search and rescue. Therefore, in order to understand how collective sensemaking is formed promptly in GCEPs against calamities, a model of collective sensemaking with its components is needed. Without knowing the components, it is difficult to decide how to enable collective sensemaking.

As such, this paper aimed to fill this knowledge gap with the objective of developing a model of collective sensemaking with its components in the context of GCEPs. Accordingly, this paper is structured with the following sections: literature review, research methodology, results, discussion of findings, and conclusions. The findings will help the teams in GCEPs to understand the aspects that are to be prioritized to make collective sense against calamities in their projects and will also inform future researchers in developing further theories and practices in this research context.

Literature review

Theory and practice development on teamwork was initiated in the industry at the end of the 20th century. However, some aspects continue to evolve. Similarly, although the origin of collective sensemaking theory and practice development goes back to this era (e.g., Weick, 1993; Weick and Roberts, 1993; Weick, 1995), this continues to be an evolving research theme over the decades (e.g., Bitencourt and Bonotto, 2010; Klein et al., 2010; Bietti et al., 2019; Knight et al., 2024). As discussed in the introduction, this paper develops a model of collective sensemaking with its components for the context of GCEP teams. For this purpose, this section reviews the related literature in order to conceptualize collective sensemaking with its components as the first stage of the adopted research methodology.

Definition of collective sensemaking

The literature presents various definitions and views on collective sensemaking. These have been developed by many researchers in different contexts over time. This section reviews the literature to identify the characteristics of collective sensemaking in order to define it for the context of GCEPs.

An early view indicated that collective sensemaking was the making of sense in a team by assigning a meaning to an ongoing occurrence by all team members (Gioia and Chittipeddi, 1991). This is relevant to the GCEP teams to form collective sense, as they need to assign a meaning to what is going on in the project environment, particularly when a calamity is faced. However, sensemaking is an intangible human characteristic, and it is difficult to form at the team level due to the tendency to break the team coordination structure (e.g., Klein et al., 2010). Bitencourt and Bonotto (2010) stated that collective sensemaking was a process created in the mind of every individual in a team. The same view, but from a slightly different angle, was given by some authors stating that collective sensemaking was made with the contribution of all individuals in a team influencing each other (e.g., Weick and Roberts, 1993; Frohm, 2002; Boreham, 2004; Gray, 2007). However, Frohm (2002) confirmed empirically that collective sensemaking was not an aggregation of individual sensemaking, and, according to Klein et al. (2010), collective sensemaking was a macro-cognitive and collective team function that facilitated understanding the current situation and anticipating future uncertain and ambiguous conditions. However, collective sensemaking does not always occur through every team member’s contributions, and this is the team’s capacity to integrate and build up shared mental models to form collective knowledge on the prevailing situations (Akgün et al., 2012). Further views indicated that communication, reflection, and social cognition were needed to form collective sensemaking (Talat and Riaz, 2020). Varanasi et al. (2023) extended this view, stating that these were to be made at a particular point in time and space. 
The teams should also have analytical capacity, synthesizing and interpreting the situation to share appropriate narratives for building up social cognition to make collective sense (Neill et al., 2007; Klein et al., 2010; Akgün et al., 2012). Some authors also identified collective sensemaking as a goal-oriented collective activity (e.g., Gioia and Chittipeddi, 1991; Bietti et al., 2019). Taken together, collective sensemaking is defined as a goal-oriented ongoing process in a team that involves exchanging provisional understanding or connecting cues and trying to agree on consensual interpretations through information gathering and re-interpretation of narratives, and/or a course of analytical actions that are developed through shared mental models for creating knowledge to identify what is going to occur at a particular point in time and space (Gioia and Chittipeddi, 1991; Weick and Roberts, 1993; Frohm, 2002; Boreham, 2004; Gray, 2007; Neill et al., 2007; Bitencourt and Bonotto, 2010; Klein et al., 2010; Akgün et al., 2012; Bietti et al., 2019; Talat and Riaz, 2020; Varanasi et al., 2023).

Components of collective sensemaking

Creating collective sensemaking is difficult in the team environment because it must be created in the mind of every individual, each of whom is influenced by the perceptions of others (Weick, 1995; Frohm, 2002; Bitencourt and Bonotto, 2010). This is particularly difficult in GCEPs due to their virtual team setups. Although the components of collective sensemaking for these teams are not directly available, the literature provides compelling evidence to identify the components of collective sensemaking for the project teams in any project context (see Table 1).

| No. | View(s) and author(s) | Component |
|---|---|---|
| 1 | Collective sensemaking takes place through: - storytelling (Claidiere and Sperber, 2010; Bietti et al., 2019; Bolton and Landells, 2023); - two or more people communicating together and seeking to synthesize and share their individual thoughts, intentions, and feelings (Mokline and Ben Abdallah, 2022); - high-level information flows through communications (Fellows and Liu, 2017); - having a language for the communication (Weick et al., 2005); - resolving contradictions through narrating and spontaneous discussions between team members to exchange feelings (Boreham, 2004); - contacting outsiders such as customers and suppliers (Edmondson and Nembhard, 2009; Akgün et al., 2012); - transferring actions and rules from one member to another (Waller and Uitdewilligen, 2008). | Communication |
| 2 | Collective sensemaking takes place through: - orchestrating narratives to identify a cause (Sharma and Grant, 2011; Bietti et al., 2019); - developing a shared meaning to understand the situation by gathering and interpreting information (Christiansen and Varnes, 2009; Akgün et al., 2012); - creating, updating, and rewriting the story of what has occurred and maximally aligning the current plausible understanding to the available information (Weick et al., 2005; Waller and Uitdewilligen, 2008); - interaction among individuals and establishing a synthesis of thoughts, meanings, and intentions (Bitencourt and Bonotto, 2010). | Information gathering |
| 3 | Collective sensemaking takes place through the abilities of: - assigning a meaning to an ongoing occurrence by all team members (Gioia and Chittipeddi, 1991); - social negotiation and interpreting messages to develop understanding of the work environment and reach consensus on their actions (Neill et al., 2007); - developing a shared meaning to understand the situation by gathering and interpreting information (Christiansen and Varnes, 2009; Akgün et al., 2012); - labelling, indexing, sorting, abstracting, and coding of information to give meaning (Akgün et al., 2012); - interpreting messages to develop understanding of the work environment and reach consensus on their actions (Neill et al., 2007); - interpreting ambiguous, complex, or unexpected events (Turner et al., 2023). | Interpretation |
| 4 | Collective sensemaking takes place through the abilities of: - labelling, indexing, sorting, abstracting, and coding of information to give meaning (Akgün et al., 2012); - verifying findings by comparing with similar past situations (Akgün et al., 2012); - reaching a level of symbolic reality of pure meaning on the prevailing situation (Bitencourt and Bonotto, 2010); - interaction among individuals and establishing a synthesis of thoughts, meanings, and intentions (Bitencourt and Bonotto, 2010); - building shared mental models through experimental actions (Boreham, 2004; Akgün et al., 2012; Sund, 2024); - analyzing the atmospheric dynamics (Knight et al., 2024). | Analytical capacity |
| 5 | Formation of collective sensemaking needs: - goal orientation as a collective activity (Gioia and Chittipeddi, 1991; Bietti et al., 2019); - goal orientation to shape the way of learning (Chadwick and Raver, 2015); - goal-oriented care (Huybrechts et al., 2023). | Goal orientation |
| 6 | Formation of collective sensemaking needs: - educational benchmarking for algorithmic sensemaking (Obreja, 2024); - creating measurable benchmarks for values and social behaviors (Arnold, 2021); - using a benchmarking system for making sense (Charbonneau, 2010); - benchmarking as a model for knowledge creation (Kleemola, 2005). | Benchmarking |

As shown in Table 1, communication is the first component of collective sensemaking, and it facilitates information exchange for collective sensemaking to take place (Neill et al., 2007). This is the first activity to take place for the formation of collective sensemaking and can be carried out through methods such as online meetings (Chandler and Wallace, 2009) and the Internet of Things (IoT) for sending instant messages (Annamalai et al., 2024). Communication is achieved in two ways, i.e., internal and external communication. The purpose of both internal and external communication is to gather the required information promptly for the teams to make collective sense, and thus, the second associated component of collective sensemaking is identified as information gathering (see Table 1). Such information gathering can be undertaken in different ways, such as listening to storytelling and narratives on the causes of calamities (Sharma and Grant, 2011; Bietti et al., 2019) and interaction among individuals to establish a synthesis of thoughts (Bitencourt and Bonotto, 2010). The information collected through communication is to be interpreted accurately and promptly in order to make collective sense, and thus, the third component of collective sensemaking is identified as interpretation (see Table 1). Interpretation involves the development and application of different methods to comprehend the meaning of information (Daft and Weick, 1984). Interpretation entails fitting the collected information into some structured form to understand it and then make the appropriate responses (Daft and Weick, 1984; Fellows and Liu, 2017). Interpretation also shapes perceptions of the situation by directing what information is received, how it is interpreted, and how it is utilized (Neill et al., 2007; Akgün et al., 2012). The fourth component of collective sensemaking is the analytical capacity (see Table 1). 
The idea is that the teams should have the analytical capacity to interpret the gathered information through communication. Analytical capacity is the ability to simultaneously incorporate multiple perspectives, assigning meanings to the prevailing situations to facilitate the team’s decision making to make sense (Neill et al., 2007). The analytical capacity enables the GCEP teams to interpret the danger of calamities instantly and take immediate actions (Daft and Weick, 1984; Weick, 1995; Weick et al., 2005). In addition to these four direct components of collective sensemaking, there are two important aspects that should take part in the process of making collective sense. These are identified as the fifth and sixth components of collective sensemaking, i.e., goal orientation and benchmarking, respectively (see Table 1). As discussed above, the teams should make collective sense to become resilient and maintain the required level of performance. This is the goal of a team, and thus, goal orientation becomes part of making collective sense, as presented in the literature (e.g., Gioia and Chittipeddi, 1991; Bietti et al., 2019). Similarly, the project teams use their prior experience on similar situations through benchmarking to make collective sense in order to detect the danger of calamities. The literature shows some examples, such as using a benchmarking system for making sense (Charbonneau, 2010), as well as benchmarking as a model for knowledge creation to make collective sense (Kleemola, 2005).

In summary, the literature provides noteworthy evidence to identify the components of collective sensemaking as communication, information gathering, interpretation, analytical capacity, goal orientation, and benchmarking for any project in general. However, there is a lack of research evidence to decide whether these components are valid for the formation of collective sensemaking in GCEPs. The rest of this paper is focused on investigating the model of collective sensemaking for this project team context with the help of the reviewed literature.

Research methodology

Research design

The design of a research study depends on the research questions that are to be investigated (Bryman, 2015; Bryman and Bell, 2015; Saunders et al., 2016). The research question of this study is: what are the components of collective sensemaking for GCEPs? As discussed in the literature review section, the literature contains some noteworthy contributions that identify the components of collective sensemaking in the general project context. Thus, a systematic, structured, and in-depth literature review was conducted at the first stage to conceptualize collective sensemaking and identify its components. The literature search was conducted through several search engines and databases such as Google Scholar, Scopus, ScienceDirect, Springer, and Wiley. The search criteria included phrases related to collective sensemaking of project team members in GCEPs, such as “team collective sensemaking”, “collective sensemaking in GCEPs”, and “components of collective sensemaking”. Published work was initially collected from various sources to obtain an overall view of the research context, but only journal papers were used to carry out the critical and systematic literature review to identify the components of collective sensemaking.

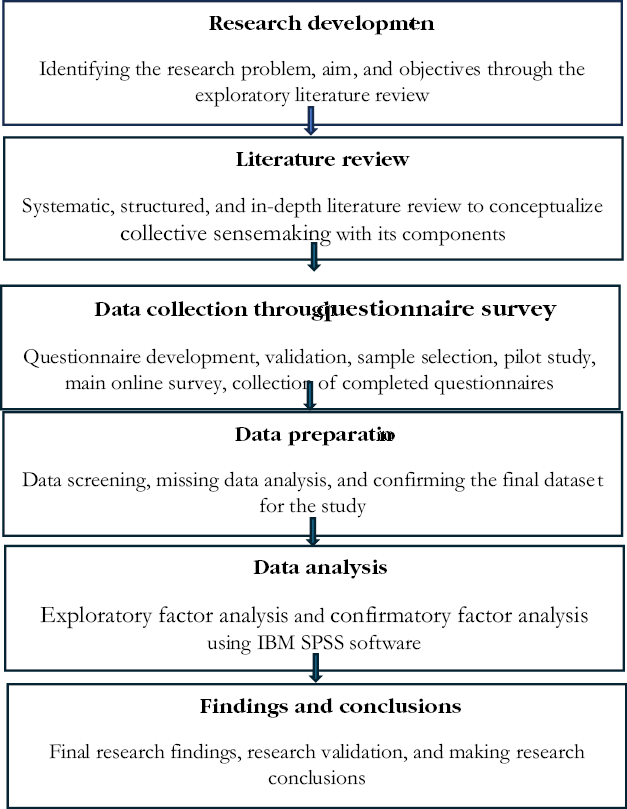

The research design was carried out following two philosophical positions. The first is the research ontological philosophical position that is concerned with the nature of reality (Saunders et al., 2016). This philosophy refers to assumptions about the world (Corbin and Strauss, 2008), assumptions made about the nature of reality (Mark et al., 1991), and the nature of reality and its characteristics (Creswell, 2012) when researchers develop the research concepts. In general, ontology raises questions regarding the assumptions that researchers have about the way the world operates and people’s commitment to particular views (Saunders et al., 2016). Accordingly, the questions about the assumptions made for conceptualizing collective sensemaking with its components through the literature review by the researcher, focusing on how project team members work, illustrate the ontological philosophical position of this study. However, due to the requirement of testing the model fit of collective sensemaking with its components to the GCEP team context, the literature alone was insufficient to answer this question, and, thus, it was deemed more appropriate to adopt a quantitative research approach (Creswell, 2014; Saunders et al., 2016). Accordingly, this study was grounded on the belief that complex interactions among the various team members in GCEPs could be explored through a systematic and simplified approach according to the second research position grounded in the epistemological research philosophy. This research philosophy is concerned with what constitutes acceptable knowledge in a field of study or discipline (Saunders et al., 2016) and the question of what is or should be regarded as acceptable knowledge in a discipline (Bryman, 2015; Bryman and Bell, 2015). 
The most suitable perspective under the epistemological research philosophy for this study was positivism, which views acceptable knowledge as constituting only phenomena that have direct observable variables and can be quantifiable (Bryman, 2015; Bryman and Bell, 2015). Accordingly, a positivist perspective was adopted, assuming that acceptable knowledge on such team member interactions was constituted through direct observable variables that could be quantifiable (Bryman, 2015; Bryman and Bell, 2015). Thus, the second stage of the research design was designed to conduct a quantitative survey among the team members in GCEPs in order to test the model fit of collective sensemaking with its components. The adopted research methodology process is shown in Figure 1.

Figure 1. Research methodology process

Development of the questionnaire

The first step in developing the questionnaire was selecting the survey instrument. As is general practice in quantitative research, the authors first investigated whether a compatible survey instrument was available in the literature. The existing survey instrument of team sensemaking developed by Akgün et al. (2012) was deemed suitable and was adopted because it was compatible with the scope of this study in two respects. The first was that both studies shared the same scope, namely team sensemaking, although the term collective sensemaking was used in the current study. The second was the alignment of the components of team/collective sensemaking in both studies, although slightly different (albeit similar) labels were used. Akgün et al. (2012) developed this survey instrument with a 31-item scale by adapting different components/variables from other studies: internal communication (Neill et al., 2007; Park et al., 2009), external communication (Chang and Cho, 2008), information gathering (Moorman, 1995), information classification (Akgün et al., 2006), building shared mental models (Lynn et al., 2000), and experimental action (Bogner and Barr, 2000). These components were matched with the components of collective sensemaking in the current study, although a few labels were slightly different (see Table 1). For example, the current study used communication to represent both internal and external communication. Furthermore, information gathering is common to both studies. Overall, the focus of both studies is on collective/team sensemaking, and thus, they match contextually regardless of minor differences in the terms used for components. Therefore, the survey instrument of Akgün et al. (2012) was adopted, with the item wording edited to suit the GCEP teams.

Using a survey instrument that is free from bias and has validated psychometric properties is very important in questionnaire surveys in order to minimize the risk of biased results (Field, 2009). For this purpose, the survey instrument was checked using three theoretical methods applied in quantitative research (Field, 2009). The existing results of using this survey instrument in the original study or in other literature were used to apply these validation checks. The first method was dropping the items with factor loadings below 0.5 using the available exploratory factor analysis (EFA) results. Most researchers use 0.3 or 0.4 as the threshold limit for item reduction (Field, 2009). However, 0.5 was used as the threshold limit in this study in order to obtain stronger results, given the contextual difference between the project sector of the current study and that of Akgün et al. (2012). The second method was dropping components where Cronbach’s alpha was below 0.7. The third method was removing any group of items with a negligible percentage of loading relative to the total item scale. However, none of the items could be removed using these methods on the existing results in the literature, so all 31 items of Akgün et al. (2012) were used. These were employed with a 5-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree) in the questionnaire. The data collection consisted of team-level views, perceptions, and ideas on collective sensemaking in GCEPs from individual team members. Therefore, a 5-point Likert scale was used because it balanced simplicity against the level of detail needed to gather the views, opinions, perceptions, and attitudes of participants. Applying the survey instrument with a 5-point Likert scale also makes it easier for the respondents to understand the questionnaire and complete the survey. The final questionnaire also collected some demographic information on the respondents and their associated teams and projects.
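
The first two screening checks described above can be illustrated with a minimal Python sketch. It is not the authors' procedure, which relied on previously published results; the function names, the example responses, and the example loadings are hypothetical and were invented purely for illustration. Only the 0.5 loading threshold and the 0.7 Cronbach's alpha threshold come from the text.

```python
from statistics import variance

ALPHA_THRESHOLD = 0.7    # reliability limit stated in the text
LOADING_THRESHOLD = 0.5  # factor-loading limit stated in the text


def cronbach_alpha(responses):
    """Cronbach's alpha for one component.

    responses: list of rows, one per respondent, each row holding that
    respondent's Likert scores (1-5) for the items of the component.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    # Sample variance of each item (column) and of the summed scale score.
    item_variances = [variance(col) for col in zip(*responses)]
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


def items_to_keep(loadings, threshold=LOADING_THRESHOLD):
    """Return item labels whose largest absolute loading meets the threshold."""
    return [item for item, row in loadings.items()
            if max(abs(value) for value in row) >= threshold]


# Hypothetical data, invented purely for illustration.
example_responses = [
    [4, 5, 4],
    [3, 3, 4],
    [5, 5, 5],
    [2, 3, 2],
    [4, 4, 5],
]
example_loadings = {"item_1": [0.72, 0.10], "item_2": [0.35, 0.41]}

alpha = cronbach_alpha(example_responses)
print(f"alpha = {alpha:.3f}, component retained: {alpha >= ALPHA_THRESHOLD}")
print("items retained:", items_to_keep(example_loadings))
```

In this sketch, a component would be kept when its alpha is at least 0.7, and an item would be kept when at least one of its loadings reaches 0.5 in absolute value.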

The next step in the survey was the validation of the questionnaire before sending it out to the respondents. One of the lead researchers proofread the document to check the content and face validity. Thereafter, a pilot survey was conducted to identify potential problems, check mistakes and errors, and refine the research process before launching the main survey. Slight amendments, in particular correcting grammar and English, were made with the pilot survey feedback received from six participants from academia and industry.

Selection of the questionnaire survey sample

In order to have meaningful results, the sample selection is significantly important in quantitative research (Field, 2009). According to the research question, the questionnaire survey needed to be carried out among the team members in GCEPs. Accordingly, data were collected from randomly selected individuals in GCEPs following the simple random sampling method. For this purpose, two selection criteria were used to decide the appropriate GCEP teams. The first criterion was the requirement of team member geographical dispersion in two or more countries in order to make sure that they were working in virtual team setups. There may be a combination of team members from different organizations in such virtual teams. Therefore, only the teams that consisted of team members from a single organization were included in order to have a specific sample as the second selection criterion. There were no restrictions on the nature, sector, and value of the projects, as well as on the functions and sizes of the teams, because the research question did not depend on these factors. In some situations, such GCEP teams work on different projects at the same time. Thus, in order to have meaningful data, the instructions were given at the beginning of the survey to complete the questionnaire in terms of a single GCEP to which the respondents were linked.

The most important requirement of a questionnaire survey is the selection of the appropriate sample size. Typically, the qualitative research studies focus on in-depth studies using relatively small purposeful samples, whereas the quantitative studies depend on larger random samples (Patton, 1990). The sample size is very important in quantitative research because the strength of the output depends on it (Field, 2009). Accordingly, the sample size was decided in terms of the data analysis methods that are described below. In order to match both EFA and confirmatory factor analysis (CFA) methods used in the data analysis, the minimum threshold of 150 responses was finalized (Guadagnoli and Velicer, 1988; Field, 2009). Recruitment of respondents was supplemented with snowball sampling (Naderifar et al., 2017) due to the impossibility of finding a single database of GCEPs. As such, the participants were selected from anywhere in the world through known contacts and networking.

Results

The data analysis strategy

The data analysis strategy consisted of three stages. Data preparation was performed at the first stage with data screening. Missing data analysis was conducted manually as well as using the missing data analysis function in the IBM SPSS Statistics 28 software (Hair et al., 2010). The dataset prepared after data imputation through missing data analysis was used to analyze the data in stage 2.

The second stage of data analysis involved applying the EFA. This analysis examines the underlying patterns and relationships among a large number of variables in a dataset to determine whether they can be condensed or summarized into a smaller set of factors or components (Field, 2009). As collective sensemaking was measured by adapting the 31-item scale from the literature relating to a general project, the EFA was used to help decide the compatibility of the survey instrument with the GCEP context. The other purpose of applying the EFA was item reduction. The EFA was performed using principal component analysis (PCA) with Varimax rotation in the IBM SPSS software. PCA is used in quantitative research to extract important information from a large dataset by means of a smaller set of new orthogonal variables called principal components. These patterns can be observed using scatter diagrams (Abdi and Williams, 2010). Accordingly, the PCA was used to reduce the cases-by-variables data table into principal components (Greenacre et al., 2022). Most researchers apply Varimax rotation to adjust the component loadings in PCA (Corner, 2009), and the same method was suitable for this study. However, in order to reduce the risk of unreliable results, a few statistical validation checks were conducted. The first check was applying the 0.5 threshold of the Kaiser–Meyer–Olkin (KMO) measure of sampling adequacy (Field, 2009; Napitupulu et al., 2017). The second check was applying a Cronbach’s alpha reliability threshold of 0.7, which is commonly used in quantitative research. For the third check, a 0.5 communality threshold was used for item reduction, as mentioned above (Field, 2009). Generally, the communalities in EFA indicate how much variance the variables have in common and sometimes how highly predictable the variables are from one another (Pruzek, 2005). The higher the communality value, the more of the item’s variance the extracted factors explain (Tavakol and Wetzel, 2020). Therefore, the 0.5 threshold limit provided strong results.
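
As an illustration of the communality check, the sketch below assumes an orthogonal solution (such as Varimax-rotated PCA), in which an item’s communality equals the sum of its squared loadings on the retained components. The loadings and item names are hypothetical and do not come from the study; only the 0.5 threshold is taken from the text.

```python
def communality(loadings):
    """Sum of squared loadings of one item across the retained components.

    Valid for orthogonal solutions such as Varimax-rotated PCA.
    """
    return sum(value * value for value in loadings)


def screen_items(loading_table, threshold=0.5):
    """Split items into kept and dropped lists using the communality threshold."""
    kept, dropped = [], []
    for item, loadings in loading_table.items():
        if communality(loadings) >= threshold:
            kept.append(item)
        else:
            dropped.append(item)
    return kept, dropped


# Hypothetical loadings on three components (not the study's values)
hypothetical_loadings = {
    "item_a": (0.78, 0.12, 0.05),
    "item_b": (0.41, 0.38, 0.30),
    "item_c": (0.10, 0.72, 0.21),
}
kept, dropped = screen_items(hypothetical_loadings)
print(kept, dropped)
```

In this illustration, the item that spreads moderate loadings over all three components falls below the threshold, which is the kind of item the check is meant to flag.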

The third and most important stage of data analysis was conducting the CFA using the IBM SPSS Amos 26 Graphics software. This analysis was performed to test the model fit of collective sensemaking with its components. The CFA is a method of uncovering the latent structure between an observed variable and hypothesized underlying constructs (Garson, 2009). This often involves detecting which variables load onto which factors (Kim and Mueller, 1978). Accordingly, CFA was performed to assess the goodness of model fit of collective sensemaking with its components.

Details of survey responses

As mentioned above, the survey was conducted among the team members in GCEPs. By the time the survey was closed, 165 responses had been received. However, the final sample for the data analysis was 163, after two responses were dropped during data preparation and validation checks. The details of the respondents are set out in Table 2.

Data preparation

The data preparation started with data screening in an appropriate data table of 165 responses. Thereafter, missing data analysis was conducted. The first step in the missing data analysis was manual checking of survey responses to detect mismatches. The second step of missing data analysis was conducted using the Missing Value Analysis option in SPSS (cf. Hair et al., 2010). The purpose of this test was to determine whether the remaining pattern of missing data after deleting the cases was missing completely at random (MCAR). The test confirmed that the missing data pattern was MCAR because Little’s overall test of missing data was not significant (Little’s MCAR test: chi-square = 8,351.938, df = 9296, Sig. = 1.000). Two responses were dropped due to missing several pieces of key information or other incompatibilities during the data preparation. The remaining sample for the EFA was 163 responses.
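
As a rough plausibility check on the reported significance value, the sketch below approximates the upper-tail probability of a chi-square statistic using the Wilson–Hilferty normal approximation. This is an assumption made for illustration (SPSS computes the exact probability), and the function name is hypothetical; the statistic and degrees of freedom are the values reported above.

```python
import math


def chi2_upper_tail_approx(x, df):
    """Approximate P(X >= x) for a chi-square variable with `df` degrees of freedom.

    Uses the Wilson-Hilferty cube-root normal approximation, which is
    accurate for large df; it is not the exact distribution function.
    """
    h = 2.0 / (9.0 * df)
    z = ((x / df) ** (1.0 / 3.0) - (1.0 - h)) / math.sqrt(h)
    # Upper-tail probability of the standard normal distribution
    return 0.5 * math.erfc(z / math.sqrt(2.0))


# Values reported for Little's MCAR test
p_value = chi2_upper_tail_approx(8351.938, 9296)
not_significant = p_value > 0.05
print(round(p_value, 3), not_significant)
```

Because the statistic lies well below its degrees of freedom, the approximate probability is effectively 1, which agrees with the reported Sig. = 1.000 and with the conclusion that the test was not significant.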

Exploratory factor analysis

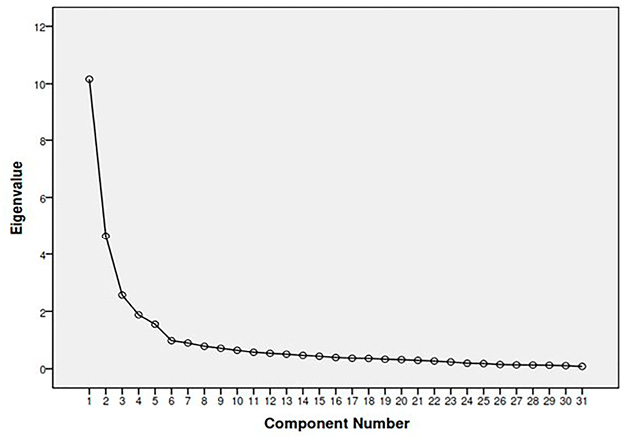

In the second stage of data analysis, the EFA was conducted using PCA with Varimax rotation in the IBM SPSS software. The 31-item scale developed by Akgün et al. (2012) was used for this purpose. The KMO measure of sampling adequacy for collective sensemaking was 0.882, which exceeded the threshold of 0.5 (Field, 2009). This result indicated that the selected sample was sufficient to conduct the EFA and draw valid conclusions. During the EFA, the 31 items of Akgün et al. (2012) loaded meaningfully onto three components, but only 19 items could be accepted, as described below (see Table 3). The other 12 items did not load above the threshold of 0.5, loaded onto more than one component without a meaningful interpretation, or both, and they were dropped (Field, 2009). The final survey instrument for collective sensemaking in the GCEP team context therefore consisted of 19 items (see Table 3). Three components of collective sensemaking were identified from the scree plot (see Figure 2). The total variance explained, using an eigenvalue threshold of 1, also indicated three components, with eigenvalues of 10.159 (32.8% of the variance), 4.646 (15%), and 2.565 (8.3%). According to the content of the related items, the three components were labeled communication and information gathering (component 1), interpretive and analytical capacity (component 2), and goal orientation and benchmarking (component 3).
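
The link between the reported eigenvalues and the percentages of variance can be reproduced with a short calculation. The sketch assumes that the PCA was run on the correlation matrix of the 31 items, so that the total variance equals the number of items; this is the usual default but is not stated in the text. The variable and function names are illustrative.

```python
N_ITEMS = 31  # items entered into the PCA
reported_eigenvalues = [10.159, 4.646, 2.565]


def percent_variance(eigenvalue, n_items=N_ITEMS):
    """Share of total variance explained, assuming total variance = n_items."""
    return 100.0 * eigenvalue / n_items


# Eigenvalue-greater-than-1 rule: keep components with eigenvalue above 1
retained = [ev for ev in reported_eigenvalues if ev > 1.0]
shares = [round(percent_variance(ev), 1) for ev in retained]
cumulative = round(percent_variance(sum(retained)), 1)
print(shares, cumulative)  # → [32.8, 15.0, 8.3] 56.0
```

Under this assumption, the three retained components together account for about 56% of the total variance of the 31 items.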

Figure 2. PCA scree plot of collective sensemaking. PCA, principal component analysis

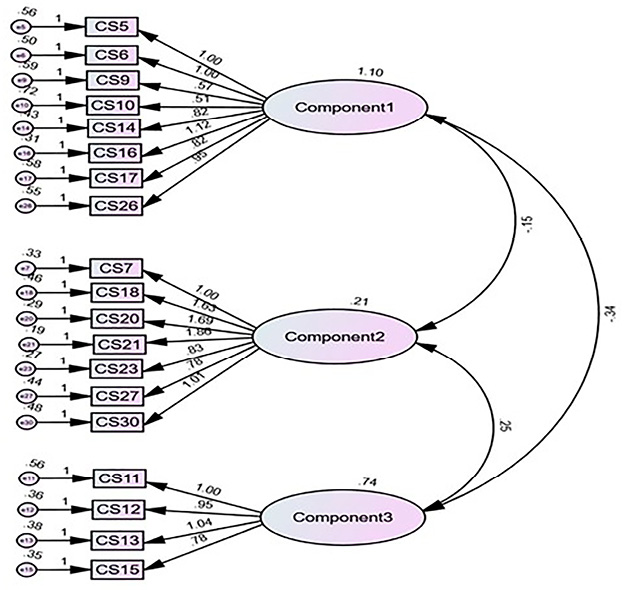

Confirmatory factor analysis

In the data analysis stage 3, the CFA was performed using the IBM SPSS Amos 26 Graphics software to test the model fit of collective sensemaking with its components. The test was carried out using 19-item scales of collective sensemaking that consisted of eight-, seven-, and four-item scales for components 1, 2, and 3, respectively (see Table 3). Although using the same dataset for both EFA and CFA tests is discouraged in research, this can be adopted in specific circumstances, such as when the requirement is to test a model derived from EFA. Prior research evidence shows the application of both analyses using the same dataset (e.g., Choi and You, 2017; Orçan, 2018). By splitting the dataset into two parts, the current study used different sub-sets of the same dataset, i.e., a first sub-set of 31-item scales for EFA and a second sub-set of 19-item scales for the CFA. This is because the EFA is applied to explore and refine the factor structure, identifying the collective sensemaking model with its components, whereas the CFA validates the refined structure of the model fit. Furthermore, several research validation activities have been undertaken under both tests in order to ensure that valid findings and conclusions can be drawn.

Note. EFA, exploratory factor analysis.

The model fit of collective sensemaking with these components was tested through this analysis. The results of the CFA and the path diagram are shown in Table 4 and Figure 3, respectively. According to the results, the model had 149 degrees of freedom. This value was above zero (df > 0), which indicated that the model could be estimated and that model fit could be assessed (Hu and Bentler, 1999; Nye, 2023). Thereafter, as discussed in the data analysis strategy, three indices were used to decide the model fit rather than relying on a single index. As shown in Table 4, the comparative fit index (CFI) and Tucker–Lewis index (TLI) were 0.8 and 0.771, respectively. Although these values fell below the cutoff of ≥0.95, or the relaxed cutoff of ≥0.90 (Hu and Bentler, 1999; Nye, 2023), they were considered reasonably satisfactory. Similarly, the root mean square error of approximation (RMSEA) was 0.129, which was above the cutoff of ≤0.06, or the relaxed cutoff of ≤0.1 (Browne and Cudeck, 1993; Hu and Bentler, 1999; Nye, 2023), and was considered marginally satisfactory. Because the CFA results depend on many factors, as discussed in the discussion of the findings below, there is a possibility of confirming the model fit of collective sensemaking on the basis of these approximate fulfillments of the model fit indices. This issue is addressed further in the discussion of the findings.
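
The reported 149 degrees of freedom can be recovered by counting parameters. The sketch assumes a standard three-factor CFA specification: each item loads on one factor only, one loading per factor is fixed to 1 for scaling, the factors are allowed to covary, there are no correlated errors, and only the covariance structure is modeled. These specification details are assumptions, since the text does not list them, and the function name is illustrative.

```python
def cfa_degrees_of_freedom(items_per_factor):
    """Degrees of freedom of a simple-structure CFA with correlated factors."""
    p = sum(items_per_factor)   # observed items
    m = len(items_per_factor)   # latent factors
    # Unique variances and covariances among the observed items
    moments = p * (p + 1) // 2
    free_loadings = p - m       # one loading per factor fixed to 1
    error_variances = p
    factor_variances = m
    factor_covariances = m * (m - 1) // 2
    free_parameters = (free_loadings + error_variances
                       + factor_variances + factor_covariances)
    return moments - free_parameters


# Eight, seven, and four items on components 1, 2, and 3
df = cfa_degrees_of_freedom([8, 7, 4])
print(df)  # → 149
```

With 19 items there are 190 unique variances and covariances and, under these assumptions, 41 free parameters, which leaves the 149 degrees of freedom reported in the text.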

Note. CFA, confirmatory factor analysis.

Figure 3. Path diagram of CFA. CFA, confirmatory factor analysis

Discussion of findings

This study confirmed the model fit of collective sensemaking for GCEPs with its three components of communication and information gathering, interpretive and analytical capacity, and goal orientation and benchmarking. These findings add value to the noteworthy contributions of previous authors from the literature review that have been used for conceptualizing collective sensemaking with its six components of communication, information gathering, interpretation, analytical capacity, goal orientation, and benchmarking (see Table 1). The contributions of these authors are meaningful because they helped to identify the components of collective sensemaking for any project. Furthermore, a suitable survey instrument could also be identified in the literature, giving credibility to the original works of Akgün et al. (2012). Accordingly, the final model of collective sensemaking for the context of GCEPs could be finalized.

Although collective sensemaking could be confirmed with three components in the GCEP team context, the CFA gave slightly contradictory results regarding the model fit indices (see Table 4). Generally, confirming model fit using CFA can be fragile because relying solely on statistical model fit indices can be misleading. Accordingly, the indices alone may not establish whether a good model fit is present. However, a strong indication was the result of df > 0, which confirmed that the model could be estimated and the model fit could be assessed. The results at least fulfilled the model fit indices approximately. This approximation was accepted because the model fit indices in CFA depend on many other factors that can give contradictory results. For example, the chi-square test was significant (p = 0.000, i.e., <0.05) and therefore did not confirm the model fit. Generally, the chi-square is not considered a very useful model fit index by most researchers due to its dependency on many factors, such as sample size, model size and complexity, distribution of variables, omitted variables, and distributional assumptions (Hayduk et al., 2007). Accordingly, the chi-square could not be taken as a good indication of the model fit of collective sensemaking because the sample size of 163 is relatively small, although slightly above the threshold limit of 150. The same applies to most model fit indices. Both the CFI and TLI were also not within the accepted cutoff limits, but a good indication was that both approached the limit (see Table 4). Both the CFI and TLI also depend on the sample size but are less sensitive to it than the chi-square. Generally, the CFI and TLI values range from 0 to 1, with higher values indicating a better model fit, and this property can be used when judging the model fit. In addition, the RMSEA was close to the relaxed cutoff limit, which was taken as supporting the model fit (see Table 4). Therefore, there is a possibility to confirm the model fit of collective sensemaking with these assumptions and approximations. The contradictory CFA results may be due to an overlap between the variables of these components or due to other reasons, such as sample size, which can be further investigated in future research.
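
To make the size of the approximations explicit, the following sketch computes how far each reported index lies from the relaxed cutoff cited in the results (≥0.90 for the CFI and TLI, ≤0.10 for the RMSEA). The dictionary and function names are illustrative; the index values are those reported in the results section.

```python
# Relaxed cutoffs cited in the text: direction and limit per index
RELAXED_CUTOFFS = {
    "CFI": (">=", 0.90),
    "TLI": (">=", 0.90),
    "RMSEA": ("<=", 0.10),
}

# Index values reported in the results section
REPORTED = {"CFI": 0.800, "TLI": 0.771, "RMSEA": 0.129}


def gap_to_cutoff(name, value):
    """Distance by which an index misses its relaxed cutoff (0.0 if it meets it)."""
    direction, cutoff = RELAXED_CUTOFFS[name]
    shortfall = cutoff - value if direction == ">=" else value - cutoff
    return round(max(shortfall, 0.0), 3)


gaps = {name: gap_to_cutoff(name, value) for name, value in REPORTED.items()}
print(gaps)  # → {'CFI': 0.1, 'TLI': 0.129, 'RMSEA': 0.029}
```

Reporting these distances alongside the indices lets readers judge for themselves how approximate the fulfillment of each cutoff is.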

As shown in Figure 3, the CFA findings of this study confirmed the 19-item scale for measuring collective sensemaking in GCEP teams, together with the eight-, seven-, and four-item scales for its aforementioned components 1, 2, and 3, respectively. This gives credibility to the authors who contributed to developing this survey instrument (Moorman, 1995; Bogner and Barr, 2000; Lynn et al., 2000; Akgün et al., 2006; Neill et al., 2007; Chang and Cho, 2008; Park et al., 2009; Akgün et al., 2012). Confirming these components with their associated variables (see Table 3 and Figure 3) represents significant theoretical and practical contributions, which are discussed in the conclusions.

Conclusions

This paper developed a model of collective sensemaking for the GCEP context by achieving its aim and objective. The aim was to address the lack of a suitable model for GCEPs, and the objective was to develop such a model. By fulfilling these, the paper successfully created a final model comprising three components: communication and information gathering, interpretive and analytical capacity, and goal orientation and benchmarking. Although some contradictory CFA results were found when assessing the model fit—detailed in the discussion of the findings—the components of collective sensemaking were meaningfully loaded through EFA, allowing the model to be completed. These findings provide clear guidance on the components necessary for creating collective sense in GCEPs.

Through these findings, the originality of the theoretical and practical contributions of this paper is noteworthy, making it one of the first studies to develop a model of collective sensemaking with its components for the context of GCEPs. The theoretical contribution is the confirmation of the collective sensemaking model with its three components in the GCEP context. This includes the confirmation of a 19-item scale to measure collective sensemaking as well as eight-, seven-, and four-item scales to measure its components of communication and information gathering, interpretive and analytical capacity, and goal orientation and benchmarking, respectively. From a methodological standpoint, future researchers can extend these theoretical contributions by using these survey instruments in their own studies to develop further theories and practices. This paper also provides an original contribution to practice in the GCEP sector, as the teams in these projects can understand which aspects are to be prioritized in order to form collective sense to face calamities while residing and working in virtual team setups across the world. Splitting the 19 items associated with collective sensemaking into its components helps these project teams to identify priorities. Accordingly, evidence of what components and variables make up collective sensemaking in GCEPs is no longer anecdotal.

Nevertheless, this study has two limitations. The first is the unavailability of a single source/database to sample the GCEP teams in order to conduct the questionnaire survey. Extra care was taken to distribute the survey among the teams in GCEPs across the world to minimize the effect of this limitation. For this purpose, random sampling was followed with the help of international contacts of the researchers, professional bodies, and the global collaborative organizations as much as possible to collect a sample from which the findings can be generalized globally. The second limitation is the reasonably small sample size for the survey, although this is above the accepted threshold limit. Therefore, extra care was taken to carry out appropriate statistical validation tests at each stage of data analysis. However, slightly unusual results were found in terms of deciding the model fit indices in the CFA. This may be due to other influences, such as a small sample size or other factors that are not within the context of this study. Therefore, a recommendation is made to explore these influences on the CFA model fit indices in future studies.

References

Abdi, H. & Williams, L.J. (2010). Principal component analysis. Wiley Interdisciplinary Reviews: Computational Statistics, 2(4), 433-459. https://doi.org/10.1002/wics.101

Akgün, A.E., Keskin, H., Lynn, G. & Dogan, D. (2012). Antecedents and consequences of team sensemaking capability in product development projects. R&D Management, 42(5), 473-493. https://doi.org/10.1111/j.1467-9310.2012.00696.x

Akgün, A.E., Lynn, G.S., & Yılmaz, C. (2006). Learning process in new product development teams and effects on product success. Industrial Marketing Management, 35, 210-224. https://doi.org/10.1016/j.indmarman.2005.02.005

Annamalai, A., Poonia, R.C. & Shanmugasundaram, S. (2024). Internet of things-based virtual private social networks on a text messaging strategy on mobile platforms. International Journal of Electronic Security and Digital Forensics, 16(1), 40-62. https://doi.org/10.1504/IJESDF.2024.136021

Arnold, W.H. (2021). Sensemaking to define organizational values and assess organizational performance, Doctoral thesis, Peabody College of Vanderbilt University in Nashville, Tennessee, USA.

Bietti, L.M., Tilston, O. & Bangerter, A. (2019). Storytelling as adaptive collective sensemaking. Topics in Cognitive Science, 11(4), 710-732. https://doi.org/10.1111/tops.12358

Bilbao, R., Ortega, P., Swingedouw, D., Hermanson, L., Athanasiadis, P., Eade, R., Devilliers, M., Doblas-Reyes, F., Dunstone, N., Ho, A.C. & Merryfield, W. (2024). Impact of volcanic eruptions on CMIP6 decadal predictions: a multi-model analysis. Earth System Dynamics, 15(2), 501-525. https://doi.org/10.5194/esd-15-501-2024

Bitencourt, C.C. & Bonotto, F. (2010). The emergence of collective competence in a Brazilian Petrochemical Company. Management Revue, 21(2), 174-192. https://doi.org/10.5771/0935-9915-2010-2-174

Boe‐Lillegraven, S., Georgallis, P. & Kolk, A. (2023). Sea change? Sensemaking, firm reactions, and community resilience following climate disasters. Journal of Management Studies. https://doi.org/10.1111/joms.12998

Bogner, W.C. & Barr, P.S. (2000). Making sense in hypercompetitive environments. Organization Science, 11(2), 212-226. https://doi.org/10.1287/orsc.11.2.212.12511

Bolton, D. & Landells, T. (2023). Storytelling, sensemaking and sustainability agendas. In The Routledge Companion to the Future of Management Research, Routledge, 253-278. https://doi.org/10.4324/9781003225508-19

Boreham, N. (2004). A theory of collective competence: challenging the neo‐liberal individualisation of performance at work. British Journal of Educational Studies, 52(1), 5-17. https://doi.org/10.1111/j.1467-8527.2004.00251.x

Braha, D. (2024). Phase transitions of civil unrest across countries and time. npj Complexity, 1(1), 1. https://doi.org/10.1038/s44260-024-00001-3

Browne, M.W. & Cudeck, R. (1993). Alternative ways of assessing fit. In Bollen KA, Long JS (Eds.). Testing structural equation models. Sage, Thousand Oaks, CA, 136-162.

Bryman, A. (2015). Social research methods. Oxford University Press, UK.

Bryman, A. & Bell, E. (2015). Business research methods. Oxford University Press, UK.

Cappucci, M. (2024). New storm to bring blizzard and more tornadoes: city-by-city forecasts. The Washington Post.

Chadwick, I.C. & Raver, J.L. (2015). Motivating organizations to learn: goal orientation and its influence on organizational learning. Journal of Management, 41(3), 957-986. https://doi.org/10.1177/0149206312443558

Chandler, R. & Wallace, J. (2009). The role of videoconferencing in crisis and emergency management. Journal of Business Continuity & Emergency Planning, 3(2), 161-178. https://doi.org/10.69554/YQHG2151

Chang, D.R. & Cho, H. (2008). Organizational memory influences new product success. Journal of Business Research, 61(1), 13-23. https://doi.org/10.1016/j.jbusres.2006.05.005

Charbonneau, É. (2010). Use and sensemaking of performance measurement information by local government managers: the case of Quebec’s municipal benchmarking system, Doctoral thesis, Rutgers University-Graduate School-Newark.

Choi, C.H. & You, Y.Y. (2017). The study on the comparative analysis of EFA and CFA. Journal of Digital Convergence, 15(10), 103-111.

Christiansen, J. K. & Varnes, C. J. (2009). Formal rules in product development: sensemaking of structured approaches, Journal of Product Innovation Management, 26(5), 502-519. https://doi.org/10.1111/j.1540-5885.2009.00677.x

Claidiere, N. & Sperber, D. (2010). The natural selection of fidelity in social learning. Communicative and Integrative Biology, 3, 350-351. https://doi.org/10.4161/cib.3.4.11829

Comola, F., Märtl, B., Paul, H., Bruns, C. & Sapelza, K. (2024). Impacts of global warming on hurricane-driven insurance losses in the United States. https://doi.org/10.31223/X5GQ4H

Corbin, J. & Strauss, A. (2008). Basics of qualitative research: techniques and procedures for developing grounded theory, Sage. https://doi.org/10.4135/9781452230153

Corner, S. (2009). Choosing the right type of rotation in PCA and EFA. JALT Testing & Evaluation SIG Newsletter, 13(3), 20-25.

Creswell, J. W. (2012). Qualitative inquiry and research design: choosing among five approaches. Lincoln: Sage.

Creswell, J. W. (2014). Research design: qualitative, quantitative, and mixed methods approaches, 4th ed, Sage publications.

Cristofaro, M. (2022). Organizational sensemaking: a systematic review and a co-evolutionary model. European Management Journal, 40(3), 393-405. https://doi.org/10.1016/j.emj.2021.07.003

Daft, R.L. & Weick, K.E. (1984). Toward a model of organizations as interpretation systems. Academy of Management Review, 9(2), 284-295. https://doi.org/10.2307/258441

Davies, J. & Malik, H. (2024). The organization of crime and harm in the construction industry. Taylor & Francis. https://doi.org/10.4324/9781003167990

Dharmarathne, G., Waduge, A.O., Bogahawaththa, M., Rathnayake, U. & Meddage, D.P.P. (2024). Adapting cities to the surge: a comprehensive review of climate-induced urban flooding. Results in Engineering, 102123. https://doi.org/10.1016/j.rineng.2024.102123

Edmondson, A. C. & Nembhard, I. M. (2009). Product development and learning in project teams: the challenges are the benefits. Product Innovation Management, 26, 123-138. https://doi.org/10.1111/j.1540-5885.2009.00341.x

Fellows, R. & Liu, A.M. (2017). ‘What does this mean’? Sensemaking in the strategic action field of construction. Construction Management and Economics, 35(8-9), 578-596. https://doi.org/10.1080/01446193.2016.1231409

Ferreira, V., Sotero, L. & Relvas, A.P. (2024). Facing the heat: a descriptive review of the literature on family and community resilience amidst wildfires and climate change. Journal of Family Theory & Review, 16(1), 53-71. https://doi.org/10.1111/jftr.12551

Field, A. (2009). Discovering Statistics Using SPSS. 3rd ed. Sage publications.

Forbis, D.C., Patricola, C.M., Bercos-Hickey, E. & Gallus Jr, W.A. (2024). Mid-century climate change impacts on tornado-producing tropical cyclones. Weather and Climate Extremes, 44, 100684. https://doi.org/10.1016/j.wace.2024.100684

Frohm, C. (2002). Collective competence in an interdisciplinary project context, PhD Thesis. Linköpings University. Sweden.

Garson, G.D. (2009). Factor Analysis from Statnotes: Topics in Multivariate Analysis. Available at http://faculty.chass.ncsu.edu/garson/pa765/statnote.htm

Gioia, D.A. & Chittipeddi, K. (1991). Sensemaking and sense-giving in strategic change initiation. Strategic Management Journal, 12(6), 433-448. https://doi.org/10.1002/smj.4250120604

Global Assessment Report on Disaster Risk Reduction (GAR). (2025). United Nations.

Gray, S.L. (2007). A grounded theory study of the phenomenon of collective competence in distributed, interdependent virtual teams, PhD Thesis, University of Phoenix, USA.

Green, R.H. (1993). Calamities and catastrophes: Extending the UN response. Third World Quarterly, 14(1), 31-55. https://doi.org/10.1080/01436599308420312

Greenacre, M., Groenen, P.J., Hastie, T., d’Enza, A.I., Markos, A. & Tuzhilina, E. (2022). Principal component analysis. Nature Reviews Methods Primers, 2(1), 100. https://doi.org/10.1038/s43586-022-00184-w

Guadagnoli, E. & Velicer, W.F. (1988). Relation of sample size to the stability of component patterns. Psychological Bulletin, 103(2), 265. https://doi.org/10.1037/0033-2909.103.2.265

Hair, J.F., Black, W.C., Babin, B.J., Anderson, R.E. & Tatham, R.L. (2010). Multivariate data analysis, 7th ed. Pearson. New Jersey.

Hayduk, L., Cummings, G., Boadu, K., Pazderka-Robinson, H. & Boulianne, S. (2007). Testing! testing! one, two, three–Testing the theory in structural equation models! Personality and Individual Differences, 42(5), 841-850. https://doi.org/10.1016/j.paid.2006.10.001

Hu, L. & Bentler, P.M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: conventional criteria versus new alternatives. Structural Equation Modelling, 6(1), 1-55. https://doi.org/10.1080/10705519909540118

Huybrechts, I., Boeykens, D., Grudniewicz, A., Gray, C.S., De Sutter, A., Pype, P., Van de Velde, D., Boeckxstaens, P. & Anthierens, S. (2023). Exploring readiness for implementing goal-oriented care in primary care using normalization process theory. Primary Health Care Research & Development, 24. https://doi.org/10.1017/S1463423622000767

Iwachido, Y., Kaneko, M. & Sasaki, T. (2024). Mixed coastal forests are less vulnerable to tsunami impacts than monoculture forests. Natural Hazards, 120(2), 1101-1112. https://doi.org/10.1007/s11069-023-06248-8

Júnior, L.C.R., Frederico, G.F. & Costa, M.L.N. (2023). Maturity and resilience in supply chains: a systematic review of the literature. International Journal of Industrial Engineering and Operations Management, 5(1), 1-25. https://doi.org/10.1108/IJIEOM-08-2022-0035

Kanwar, S. & Sharma, P.K. (2024). Overall construction safety and terror management. In AIP Conference Proceedings, 2986(1), AIP Publishing. https://doi.org/10.1063/5.0195565

Khodahemmati, N. & Shahandashti, M. (2020). Diagnosis and quantification of post-disaster construction material cost fluctuations. Natural Hazards Review, 21(3), 04020019. https://doi.org/10.1061/(ASCE)NH.1527-6996.0000381

Kim, J.O. & Mueller, C.W. (1978). Factor analysis: statistical methods and practical issues. Sage. https://doi.org/10.4135/9781412984256

Kleemola, A. (2005). Group benchmarking as a model for knowledge creation in supply management context. Tampere University of Technology.

Klein, G., Wiggins, S. & Dominguez, C.O. (2010). Team sensemaking. Theoretical Issues in Ergonomic Science, 11(4), 304-320. https://doi.org/10.1080/14639221003729177

Knight, E., Lok, J., Jarzabkowski, P. & Wenzel, M. (2024). Sensing the room: the role of atmosphere in collective sensemaking. Academy of Management Journal. https://doi.org/10.5465/amj.2021.1389

Lee, C.C., Wang, C.W., Ho, S.J. & Wu, T.P. (2021). The impact of natural disaster on energy consumption: international evidence. Energy Economics, 97, 105021. https://doi.org/10.1016/j.eneco.2020.105021

Lübcke, T., Steigenberger, N., Wilhelm, H. & Maurer, I. (2025). Multimodal collective sensemaking in extreme contexts: evidence from maritime search and rescue. Journal of Management Studies, 62(3), 1220-1264. https://doi.org/10.1111/joms.13133

Lynn, G.S., Reilly, R.R. & Akgün, A.E. (2000). Knowledge management in new product teams. IEEE Transactions on Engineering Management, 47(2), 221-231. https://doi.org/10.1109/17.846789

Mark, E., Richard, T. & Andy, L. (1991). Management research: an introduction.

Mokline, B. & Ben Abdallah, M.A. (2022). The mechanisms of collective resilience in a crisis context: The case of the ‘COVID-19’ crisis. Global Journal of Flexible Systems Management, 23(1), 151-163. https://doi.org/10.1007/s40171-021-00293-7

Moorman, C. (1995). Organizational market information processes. Journal of Marketing Research, 32. 318-335. https://doi.org/10.1177/002224379503200307

Naderifar, M., Goli, H. & Ghaljaie, F. (2017). Snowball sampling: a purposeful method of sampling in qualitative research. Strides in Development of Medical Education, 14(3). https://doi.org/10.5812/sdme.67670

Napitupulu, D., Kadar, J.A. & Jati, R.K. (2017). Validity testing of technology acceptance model based on factor analysis approach. Indonesian Journal of Electrical Engineering and Computer Science, 5(3), 697-704. https://doi.org/10.11591/ijeecs.v5.i3.pp697-704

Neill, S., McKee, D. & Rose, G.M. (2007). Developing the organization’s sensemaking capability. Industrial Marketing Management, 36(6), 731-744. https://doi.org/10.1016/j.indmarman.2006.05.008

Nye, C.D. (2023). Reviewer resources: confirmatory factor analysis. Organizational Research Methods, 26(4), 608-628. https://doi.org/10.1177/10944281221120541

Obreja, D.M. (2024). When stories turn institutional: how TikTok users legitimate the algorithmic sensemaking. Social Media+ Society, 10(1). https://doi.org/10.1177/20563051231224114

Orçan, F. (2018). Exploratory and confirmatory factor analysis: which one to use first. Journal of Measurement and Evaluation in Education and Psychology, 9(4), 414-421. https://doi.org/10.21031/epod.394323

Park, M.H., Lim, J.V. & Philip, H.B. (2009). The effect of multi-knowledge individuals on performance in cross-functional new product development teams. The Journal of Product Innovation Management, 26, 86-96. https://doi.org/10.1111/j.1540-5885.2009.00336.x

Patton, M.Q. (1990). Qualitative evaluation and research methods. SAGE Publications, Inc.

Preuss, L., Barkemeyer, R., Arora, B. & Banerjee, S. (2024). Sensemaking along global supply chains: implications for the ability of the MNE to manage sustainability challenges. Journal of International Business Studies, 1-23. https://doi.org/10.1057/s41267-024-00708-4

Pruzek, R. (2005). Factor analysis: exploratory. Encyclopedia of Statistics in Behavioral Science. https://doi.org/10.1002/0470013192.bsa211

Qiu, H., Su, L., Tang, B., Yang, D., Ullah, M., Zhu, Y. & Kamp, U. (2024). The effect of location and geometric properties of landslides caused by rainstorms and earthquakes. Earth Surface Processes and Landforms, 49(7), 2067-2079. https://doi.org/10.1002/esp.5816

Reddy, D.S. (2024). Progress and challenges in developing medical countermeasures for chemical, biological, radiological, and nuclear threat agents. Journal of Pharmacology and Experimental Therapeutics, 388(2), 260-267. https://doi.org/10.1124/jpet.123.002040

Ribeiro, R., Teles, D., Proença, L., Almeida, I. & Soeiro, C. (2024). A typology of rural arsonists: characterising patterns of criminal behaviour. Psychology, Crime & Law, 1-20. https://doi.org/10.1080/1068316X.2025.2564365

Sami Ur Rehman, M., Shafiq, M.T. & Afzal, M. (2022). Impact of COVID-19 on project performance in the UAE construction industry. Journal of Engineering, Design and Technology, 20(1), 245-266. https://doi.org/10.1108/JEDT-12-2020-0481

Saunders, M.N., Lewis, P. & Thornhill, A. (2016). Research methods for business students, 7th ed. Pearson Education, Harlow, UK.

Sharma, A. & Grant, D. (2011). Narrative, drama and charismatic leadership: the case of Apple’s Steve Jobs. Leadership, 7, 3-26. https://doi.org/10.1177/1742715010386777

Sund, K.J. (2024). The sharing of (mental) business models. In Cognition and Business Models: From Concept to Innovation. Cham: Springer International Publishing. 47-68. https://doi.org/10.1007/978-3-031-51598-9_3

Svennevig, K., Koch, J., Keiding, M. & Luetzenburg, G. (2024). Assessing the impact of climate change on landslides near Vejle, Denmark, using public data. Natural Hazards and Earth System Sciences, 24(6), 1897-1911. https://doi.org/10.5194/nhess-24-1897-2024

Talat, A. & Riaz, Z. (2020). An integrated model of team resilience: exploring the roles of team sensemaking, team bricolage and task interdependence. Personnel Review, 49(9), 2007-2033. https://doi.org/10.1108/PR-01-2018-0029

Tavakol, M. & Wetzel, A. (2020). Factor analysis: a means for theory and instrument development in support of construct validity. International Journal of Medical Education, 11, 245. https://doi.org/10.5116/ijme.5f96.0f4a

Tavoletti, E. & Taras, V. (2023). From the periphery to the centre: a bibliometric review of global virtual teams as a new ordinary workplace. Management Research Review, 46(8), 1061-1090. https://doi.org/10.1108/MRR-12-2021-0869

Teichmann, F.M. & Boticiu, S.R. (2024). Adequate responses to cyber-attacks. International Cybersecurity Law Review, 5(2), 337-345. https://doi.org/10.1365/s43439-024-00116-2

Turner, J.R., Allen, J., Hawamdeh, S. & Mastanamma, G. (2023). The multifaceted sensemaking theory: a systematic literature review and content analysis on sensemaking. Systems, 11(3), 145. https://doi.org/10.3390/systems11030145

Varanasi, U.S., Leinonen, T., Sawhney, N., Tikka, M. & Ahsanullah, R. (2023). Collaborative sensemaking in crisis. In Proceedings of the 2023 ACM Designing Interactive Systems Conference, 2537-2550. https://doi.org/10.1145/3563657.3596093

Waller, M.J. & Uitdewilligen, S. (2008). Talking to the room: collective sensemaking during crisis situations. In Time in organizational research. Routledge. 208-225.

Weick, K.E. (1993). The collapse of sensemaking in organizations: the Mann Gulch disaster. Administrative Science Quarterly, 628-652. https://doi.org/10.2307/2393339

Weick, K.E. (1995). Sensemaking in organizations. Sage.

Weick, K.E. & Roberts, K.H. (1993). Collective mind in organizations: heedful interrelating on flight decks. Administrative Science Quarterly, 357-381. https://doi.org/10.2307/2393372

Weick, K.E., Sutcliffe, K.M. & Obstfeld, D. (2005). Organizing and the process of sensemaking. Organization Science, 16(4), 409-421. https://doi.org/10.1287/orsc.1050.0133

Wilson, A. (2024). Ukraine at war: baseline identity and social construction. Nations and Nationalism, 30(1), 8-17. https://doi.org/10.1111/nana.12986